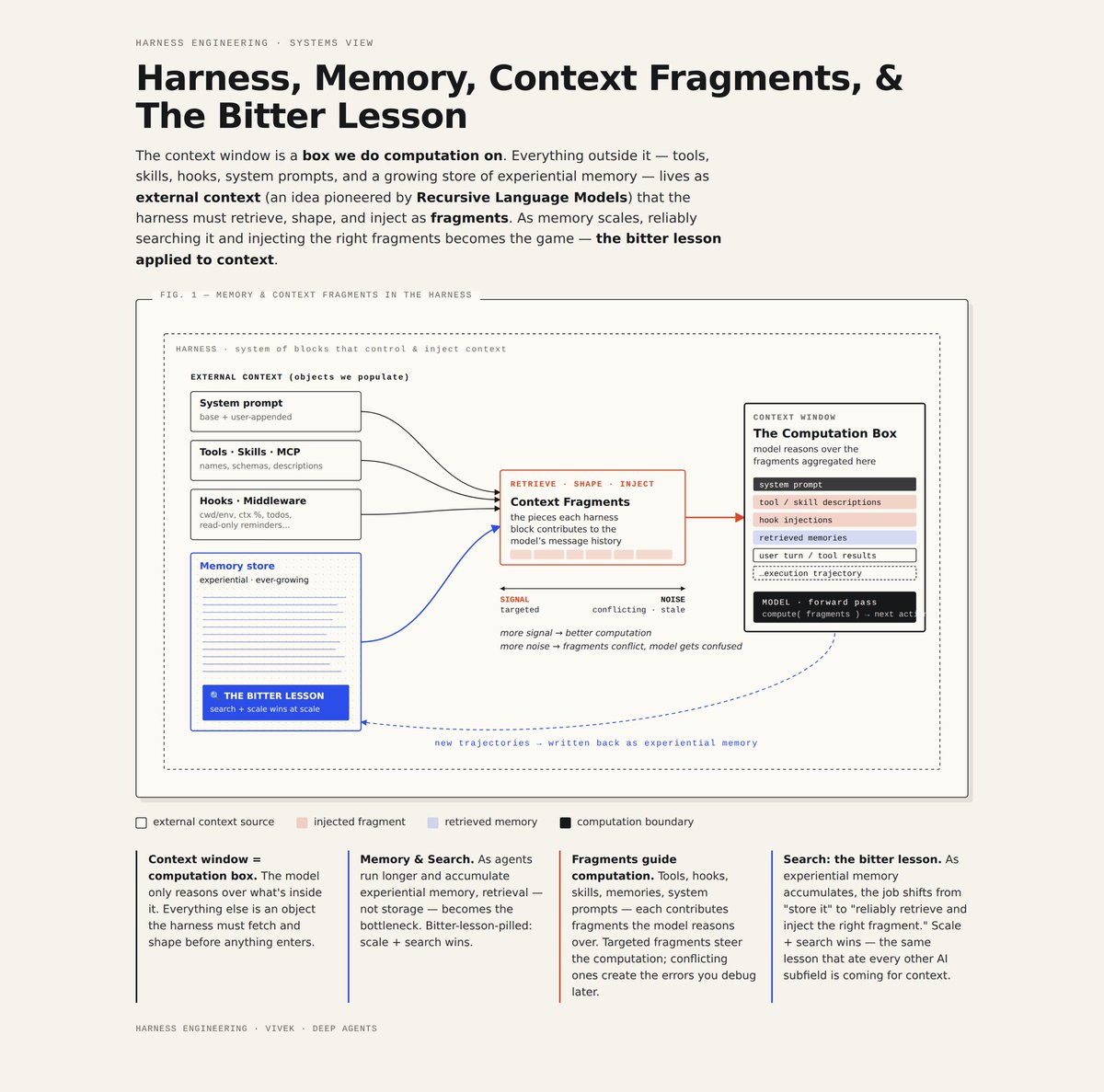

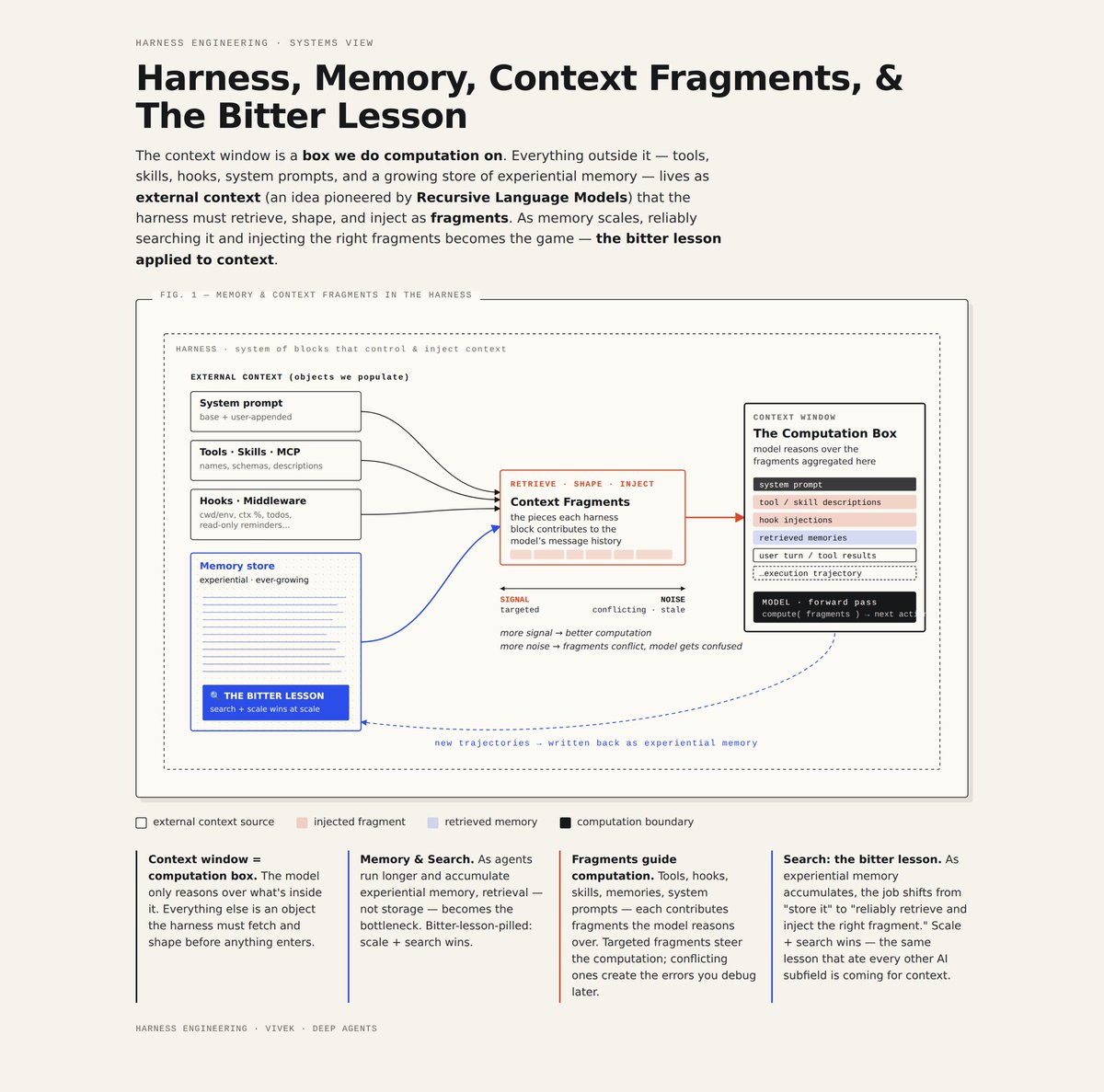

Harness, Memory, Context Fragments, & the Bitter Lesson

this is a work-in-progress mental dump on the intersections between how we use and design a harness, the implications of memory accumulating over long timescales, and the bitter lesson of search that we can’t escape

this is v30+. HTML diagrams help me iteratively refine + chat to roughly “see” and alter the mental model

Harnesses & Context Fragments:

a very important job of the harness is to efficiently & correctly route data from within its boundaries into the context window, where computation happens

the context window is a precious artifact. Harnesses make decisions on how to populate, manage, edit, and organize it so agents can do work. Each loaded object can be thought of as a Context Fragment and represents an explicit decision by the user and harness designer about what a model needs to do work at any given time.
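to make the Context Fragment idea concrete, here is a minimal sketch of a harness filling a fixed-size window. Everything in it is an assumption for illustration: the `ContextFragment` type, the `priority` field, the greedy selection rule, and the word-count stand-in for a real tokenizer are all hypothetical and not taken from any particular harness.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextFragment:
    """One object the harness may load into the context window (hypothetical type)."""
    name: str
    text: str
    priority: int  # higher = more important; an assumed ranking signal

    @property
    def cost(self) -> int:
        # Crude token estimate: whitespace-separated words.
        # A real harness would use the model's tokenizer instead.
        return len(self.text.split())


def assemble_context(fragments: list[ContextFragment], budget: int) -> list[ContextFragment]:
    """Greedily pick the highest-priority fragments that still fit in `budget`."""
    chosen: list[ContextFragment] = []
    remaining = budget
    for frag in sorted(fragments, key=lambda f: f.priority, reverse=True):
        if frag.cost <= remaining:
            chosen.append(frag)
            remaining -= frag.cost
    return chosen


fragments = [
    ContextFragment("system_prompt", "You are a careful coding agent.", priority=3),
    ContextFragment("task", "Fix the failing test in utils.py", priority=2),
    ContextFragment("old_log", "lots of stale log output " * 50, priority=1),
]
window = assemble_context(fragments, budget=20)
print([f.name for f in window])  # the stale log does not fit and is left out
```

the point of the sketch is that every fragment that lands in `window` is an explicit decision, and so is every fragment that gets left out. Greedy-by-priority is just the simplest policy to write down; a real harness could also edit, summarize, or evict fragments over time.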

many ideas on externalizing objects + loading them into the context window were pioneered and are very well described by @a1zhang with RLMs

Experiential Memory:

we’re in the very early days of deploying agents and agents produce massive amounts of data in every interaction they have. this is akin to humans doing things and remembering things they did.

however, agent memory has a massive advantage: it can be accumulated across all agents, which are easily forked and duplicated (unlike humans). @dwarkesh_sp does a good job talking about this massive benefit of artificial systems

memory can be treated as an externalized object. the harness is tasked with doing good contextualized retrieval, which means pulling in the right data from accumulated memories across all agent interactions
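a rough sketch of what "contextualized retrieval over shared memory" could look like in its simplest form. The `Memory` type, the `retrieve` function, and the Jaccard word-overlap score are all assumptions chosen to keep the example self-contained; a real system would more likely use embeddings or a learned ranker.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Memory:
    agent_id: str  # which agent produced this memory (hypothetical field)
    text: str


def tokenize(text: str) -> set[str]:
    # Lowercased whitespace split; a stand-in for real text processing.
    return set(text.lower().split())


def retrieve(store: list[Memory], query: str, k: int = 2) -> list[Memory]:
    """Return up to k memories ranked by Jaccard overlap with the query."""
    q = tokenize(query)
    scored = []
    for m in store:
        words = tokenize(m.text)
        union = q | words
        score = len(q & words) / len(union) if union else 0.0
        if score > 0:  # drop memories that share nothing with the query
            scored.append((score, m))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [m for _, m in scored[:k]]


# One shared store holding memories written by different agents.
store = [
    Memory("agent-a", "retrying the deploy after clearing the cache fixed the timeout"),
    Memory("agent-b", "the billing api rejects requests without an idempotency key"),
    Memory("agent-c", "deploy timeout caused by stale cache"),
]
hits = retrieve(store, "deploy timeout cache", k=2)
print([m.agent_id for m in hits])
```

the store here is pooled across agents, so an agent that never saw a deploy timeout can still pull in what `agent-a` and `agent-c` learned. The retrieved memories would then be loaded into the window like any other fragment.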

Search & The Bitter Lesson:

As we deploy agents in our world over multi-year timescales, there is going to be hyper-exponential growth in the amount of data produced by those agents. We should want to:

1. Own that data for ourselves. Open ecosystems are important here

2. Use that data

This means that we’ll have to search over, distill, and organize massive amounts of data. Our brain is exceptional at doing this: it uses prior experience contextually and, with enough intentional practice, mostly commits the right stuff to memory.

Our current infrastructure systems and algorithms will be put to the test and often break as we get used to this new data regime

some open questions:

- how do we efficiently distill experiences (Traces) into higher-level memory primitives that capture the important parts? How do we do this over ultra-long time horizons?

- How much of the future is Search just-in-time vs Search that gets integrated into model weights?

- How do we make models much better at self-managing their context window? How do we reduce error rates in recursively allowing agents to operate over external objects?
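to give the first question above some shape, here is a deliberately naive baseline for distilling Traces: collapse raw steps into per-action success rates and drop anything seen too rarely. This is not a proposed answer, just the simplest thing that turns many traces into a smaller object. The `Step` type, the `distill` function, and the `min_count` threshold are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    action: str       # what the agent did (hypothetical schema)
    succeeded: bool   # whether that step worked


def distill(traces: list[list[Step]], min_count: int = 2) -> dict[str, float]:
    """Collapse raw traces into per-action success rates, dropping rare actions."""
    totals: dict[str, int] = defaultdict(int)
    wins: dict[str, int] = defaultdict(int)
    for trace in traces:
        for step in trace:
            totals[step.action] += 1
            wins[step.action] += int(step.succeeded)
    # Keep only actions observed at least `min_count` times.
    return {a: wins[a] / n for a, n in totals.items() if n >= min_count}


traces = [
    [Step("run_tests", True), Step("edit_config", False)],
    [Step("run_tests", True), Step("clear_cache", True)],
    [Step("edit_config", False), Step("run_tests", False)],
]
summary = distill(traces)
print(summary)
```

a summary like this throws away ordering, context, and everything about why a step failed, which is exactly where the open question lives: what should a higher-level primitive keep that a frequency table loses?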

i’ll be expanding on, altering, and adjusting these mental models but these feel like an important subset to me on the future of designing agents practically